Introduction

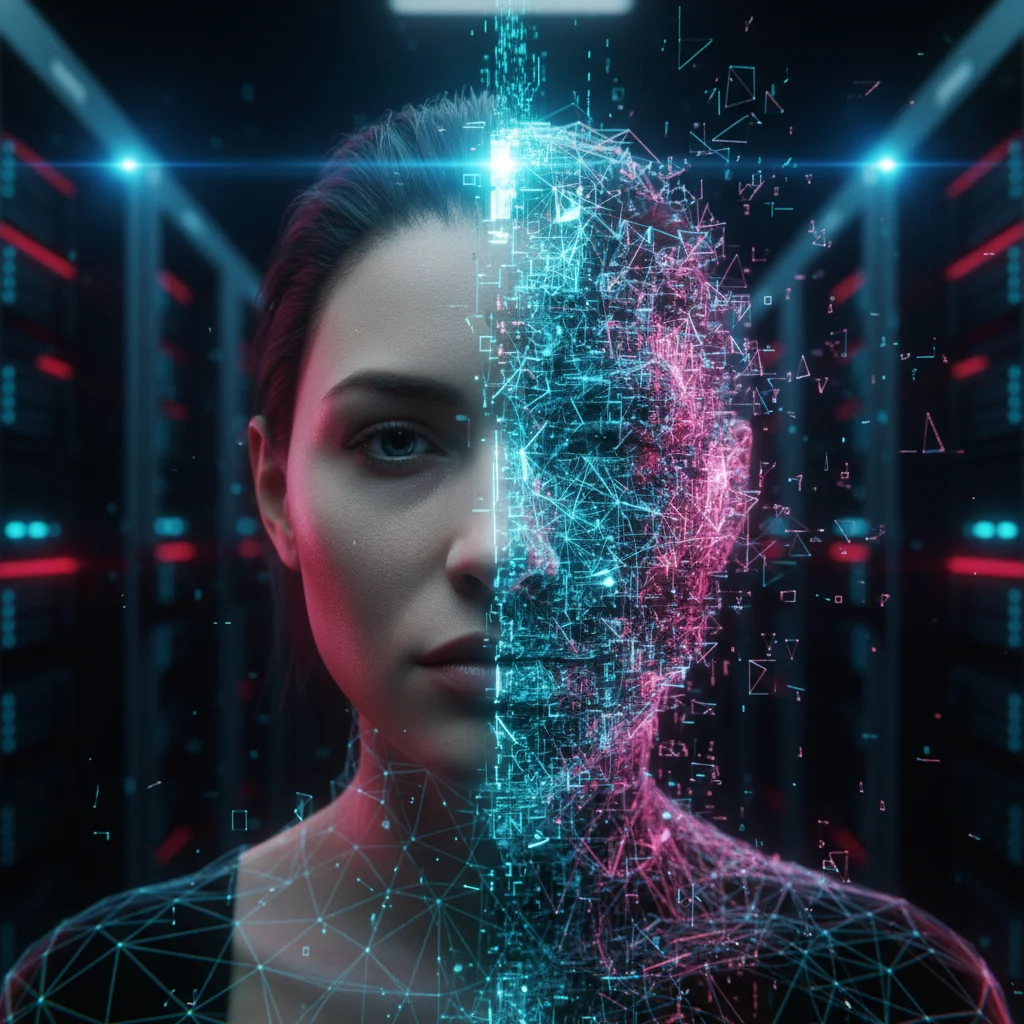

The digital landscape has transformed at an unprecedented pace, fundamentally altering how organizations communicate, authenticate identities, and protect sensitive data. As we move further into the decade, technological advancements have brought forth new vulnerabilities, making Deepfake Security Risks in 2026 a primary concern for executives and IT professionals alike. What was once considered a niche technological parlor trick has now evolved into a sophisticated, scalable vector for cyberattacks. The ability of artificial intelligence to generate hyper-realistic audio, video, and imagery has blurred the lines between reality and fabrication. Consequently, understanding Deepfake Security Risks in 2026 is no longer optional; it is an absolute necessity for any business operating in the modern digital economy.

The sophistication of generative AI models means that threat actors no longer require massive resources or specialized expertise to create convincing synthetic media. Deepfake-as-a-service platforms have proliferated on the dark web, lowering the barrier to entry for cybercriminals. This democratization of malicious technology means that organizations of all sizes face an escalated threat level. From multi-national corporations to regional small and medium-sized enterprises, navigating Deepfake Security Risks in 2026 requires a proactive, multi-layered defense strategy. As malicious actors use these tools for everything from executive impersonation to elaborate phishing schemes, businesses must continuously update their security protocols to stay ahead of the curve.

The Evolution of Synthetic Media Threats

Synthetic media has evolved quickly over the past few years. Earlier deepfakes often carried telltale signs of manipulation, such as unnatural blinking patterns or distorted audio artifacts, but current models can defeat some biometric security checks. As cybersecurity experts at CSO Online have highlighted, deepfakes are no longer a hypothetical future risk; they have become powerful enablers of financial fraud and reputational damage. In practice, Deepfake Security Risks in 2026 mean that businesses can no longer rely on recognizing a familiar voice or on unauthenticated video calls. Threat actors now exploit human trust, creating highly personalized and urgent scenarios that trick employees into authorizing fraudulent wire transfers or handing over sensitive corporate credentials.

This shift from purely technical exploitation to the exploitation of human trust requires a fundamental change in how companies approach security. Traditional firewalls and antivirus software are insufficient against social engineering attacks powered by synthetic media. Addressing Deepfake Security Risks in 2026 involves implementing zero-trust architectures, continuous authentication frameworks, and rigorous employee training programs. The integration of AI into business processes also raises broader questions about the workforce. As AI systems take over more routine tasks, professionals in many sectors ask where the human element fits, a question explored in “Will AI Replace Recruiters? Recruitment in the Age of AI.” Just as the recruitment industry must adapt to AI’s capabilities without losing human intuition, cybersecurity must combine advanced detection algorithms with critical human oversight.

Why Immediate Action is Required

The financial and reputational stakes have never been higher. The surge in AI-driven identity theft and real-time financial fraud highlights exactly why Deepfake Security Risks in 2026 demand immediate action. Malicious campaigns are not only designed to steal money but also to manipulate stock prices, damage brand reputations, and disrupt internal operations. An unverified deepfake audio clip purportedly from a CEO could lead to disastrous market consequences within minutes.

To mitigate these severe outcomes, organizations must transition from reactive postures to proactive monitoring and prevention. Evaluating Deepfake Security Risks in 2026 requires cross-functional collaboration, ensuring that IT, human resources, legal, and public relations departments are aligned in their incident response strategies. Business leaders should consider the following foundational steps:

- Deploying advanced liveness detection and biometric validation systems to intercept synthetic impersonations.

- Establishing zero-trust communication channels for all executive and financial requests.

- Conducting regular, up-to-date employee awareness training on the latest synthetic media threats.

These measures form the bedrock of a robust security posture. By acknowledging the pervasive nature of these threats, organizations can implement a structured approach to defense:

- Assess current vulnerabilities within identity verification processes.

- Integrate AI-enabled monitoring protocols to flag anomalous internal and external communications.

- Develop comprehensive incident response plans specifically tailored to active deepfake scenarios.

As we delve deeper into the specific mechanisms and impacts of synthetic media, it becomes clear that awareness is just the first step. Effectively managing Deepfake Security Risks in 2026 will dictate the resilience and trustworthiness of organizations worldwide. In the following sections, we will explore the precise nature of these threats, the industries most at risk, and the actionable strategies you can implement to fortify your defenses against the impending wave of AI-generated cyberattacks.

The Evolution of Deepfake Technology by 2026

The landscape of Deepfake Security Risks in 2026 has drastically shifted from a theoretical concern into an everyday operational hazard for organizations worldwide. Just a few years ago, generating a convincing synthetic video required extensive computational resources, large datasets, and highly specialized technical knowledge. Today, the rapid advancement of Generative Adversarial Networks (GANs) and advanced transformer models has democratized this technology. Malicious actors no longer need Hollywood-level visual effects teams; they can seamlessly swap faces, clone voices, and fabricate entire digital identities using accessible, low-cost artificial intelligence platforms. Because these systems learn to recreate human micro-expressions and precise vocal intonations with startling accuracy, understanding Deepfake Security Risks in 2026 is critical for any enterprise that relies on remote communication and digital trust.

The Rise of Deepfake-as-a-Service (DaaS)

One of the primary catalysts for Deepfake Security Risks in 2026 is the alarming emergence of Deepfake-as-a-Service (DaaS) within the cybercriminal underground. These illicit platforms operate on subscription models, offering plug-and-play interfaces that allow even novice scammers to generate hyper-realistic audio and video in mere seconds. This commercialization of malicious AI has severely lowered the barrier to entry for executing sophisticated social engineering campaigns.

Human resources and finance departments are among the most frequently targeted functions. When organizations upgrade their internal infrastructure, they must factor in these advanced threats. For example, companies weighing the question posed in “What HR Tools Benefit Malaysian Ecommerce in 2026?” are realizing that modern platforms need robust identity verification, biometric liveness checks, and AI-driven deepfake detection just to onboard new employees securely. Failing to deploy these safeguards exposes companies to synthetic applicant fraud, in which cybercriminals secure remote jobs to infiltrate corporate networks from the inside.

From Isolated Scams to Hyper-Realistic Corporate Phishing

The transition from simple email spoofing to live-injected video and audio manipulation highlights the sheer scale of Deepfake Security Risks in 2026. Attackers are routinely bypassing traditional biometric authentications by utilizing virtual camera injections during live video calls. The infamous case of a multinational firm losing $25 million after an employee was deceived by a deepfaked video conference featuring the company’s CFO remains a stark warning. This incident proved that seeing is no longer believing.

According to comprehensive threat intelligence and statistics published by ZeroThreat, cybercriminals are increasingly merging AI voice cloning with personalized phishing tactics. These multi-layered attacks exploit the psychological triggers of urgency and authority, bypassing standard email filters and deceiving highly trained professionals. As a result, mitigating Deepfake Security Risks in 2026 requires organizations to abandon their reliance on single-signal defenses and embrace multi-layered identity intelligence that analyzes metadata, network context, and device health simultaneously.
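The idea of replacing a single-signal defense with several signals evaluated together can be sketched in a few lines of Python. This is a minimal illustration, not a description of any vendor's product: the signal names, weights, and thresholds below are all assumptions chosen for readability.

```python
from dataclasses import dataclass


@dataclass
class RequestSignals:
    """Hypothetical per-request signals, each from 0.0 (benign) to 1.0 (suspicious)."""
    media_score: float     # output of a media detector (assumed to exist)
    metadata_score: float  # e.g. stripped or inconsistent file metadata
    network_score: float   # e.g. unfamiliar network or geography
    device_score: float    # e.g. unmanaged or unhealthy device


# Illustrative weights; a real deployment would tune these on its own data.
WEIGHTS = {
    "media_score": 0.4,
    "metadata_score": 0.2,
    "network_score": 0.2,
    "device_score": 0.2,
}


def combined_risk(signals: RequestSignals) -> float:
    """Weighted sum of all signals, so no single check decides alone."""
    return sum(getattr(signals, name) * weight for name, weight in WEIGHTS.items())


def decide(signals: RequestSignals, review_at: float = 0.3, block_at: float = 0.6) -> str:
    """Map the combined risk onto an action (thresholds are assumptions)."""
    risk = combined_risk(signals)
    if risk >= block_at:
        return "block"
    if risk >= review_at:
        return "manual_review"
    return "allow"
```

With these illustrative numbers, a request whose media looks clean but which arrives from an unfamiliar network on an unmanaged device still lands in manual review, which is the point of analyzing the signals simultaneously.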

Mitigating Vulnerabilities in the Post-Truth Era

Addressing and evaluating the total impact of Deepfake Security Risks in 2026 demands a proactive, defense-in-depth strategy. Companies can no longer depend solely on human perception to catch fraudulent communications. A comprehensive security framework should include several critical elements:

- Out-of-Band Verification: Implementing strict protocols where any sensitive request or financial transfer initiated via video or audio is verified through a secondary, independent communication channel.

- Advanced Detection Tools: Utilizing enterprise-grade platforms that analyze real-time video feeds for inconsistencies in lighting, pixelation, or audio-visual synchronization.

- Continuous Employee Training: Updating security awareness programs to simulate AI-generated phishing and vishing attempts, ensuring staff recognize the latest manipulation techniques.

- Device and Connection Verification: Adopting zero-trust architecture and endpoint security to verify the integrity of the underlying device and connection rather than relying solely on the visual output.
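The out-of-band verification step in the list above can be sketched as follows. This is a simplified illustration under stated assumptions: the `OutOfBandVerifier` class, the directory of callback channels, and the one-time code format are all hypothetical, and delivering the code over the second channel is left to the caller.

```python
import hmac
import secrets


class OutOfBandVerifier:
    """Confirms a sensitive request over a channel the requester did not choose."""

    def __init__(self, directory: dict[str, str]):
        # Hypothetical directory: claimed sender -> independently registered callback channel.
        self.directory = directory
        self.pending: dict[str, str] = {}  # request_id -> expected one-time code

    def start(self, request_id: str, claimed_sender: str, inbound_channel: str) -> tuple[str, str]:
        """Return (callback_channel, one_time_code) for a new request."""
        callback = self.directory.get(claimed_sender)
        # The callback must come from the directory and differ from the inbound channel.
        if callback is None or callback == inbound_channel:
            raise ValueError("no independent channel on file; escalate manually")
        code = secrets.token_hex(4)
        self.pending[request_id] = code
        return callback, code

    def confirm(self, request_id: str, code: str) -> bool:
        """Single-use check: the pending entry is consumed whether or not the code matches."""
        expected = self.pending.pop(request_id, None)
        if expected is None:
            return False
        return hmac.compare_digest(expected.encode(), code.encode())
```

The key design choice is that the callback channel is looked up from a record the organization already holds and is never taken from the request itself, since an attacker controls everything inside the request.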

Ultimately, overcoming Deepfake Security Risks in 2026 requires continuous adaptation. As the tools used to create synthetic media become more autonomous and refined, defensive technologies and corporate policies must evolve at an equally rapid pace. In the following section, we will delve deeper into the specific impacts these AI-generated threats have on global financial systems and outline industry-specific compliance requirements that are shaping the future of digital defense.

Financial Fraud and AI Voice Cloning Scams

As cybercriminals adopt hyper-realistic synthetic media, the financial sector has found itself squarely in the crosshairs of unprecedented attacks. While visual manipulation often captures the public imagination, audio spoofing has emerged as the most insidious threat to institutional wealth. Discussing Deepfake Security Risks in 2026 requires a focused look at how voice cloning technologies are driving massive spikes in corporate wire fraud and business email compromise (BEC). By weaponizing trust, attackers can bypass traditional verification protocols simply by imitating the vocal cadence of a CEO, an executive, or a trusted vendor.

The Mechanics of Voice Cloning in Corporate Deception

The science behind synthetic voice generation has advanced so rapidly that malicious actors now require only seconds of audio, scraped from a podcast, an earnings call, or social media, to build a voice-cloning model of a target. Once the model is ready, it can synthesize new sentences with convincing emotional inflection in real time. This frictionless capability is the primary engine behind Deepfake Security Risks in 2026. The $25 million video-conference fraud described earlier is a stark example: a finance worker at a multinational engineering firm was tricked by a deepfake video and voice clone of the company’s chief financial officer.

Today, attackers are no longer limited to individual targeting. They are launching automated, scalable campaigns against help desks and financial departments. According to recent threat intelligence, vishing and AI voice cloning attacks target businesses with increasing frequency, leveraging sophisticated narratives to reset credentials or authorize urgent fund transfers. These hyper-realistic attacks highlight why organizations must rethink their entire approach to identity verification.

Highly Targeted BEC and the Automation of Vishing

By coupling deepfake audio with compromised email accounts, criminals have turbocharged BEC scams. In these scenarios, an employee receives an email from an executive requesting a wire transfer, followed almost immediately by a phone call from that exact executive to confirm the transaction. The psychological pressure of hearing a superior’s voice makes it highly unlikely that the employee will question the directive. Consequently, mitigating Deepfake Security Risks in 2026 means acknowledging that the human ear is no longer a reliable arbiter of truth in corporate environments.

The global impact of these technologies is reshaping regional financial hubs as well. Banking institutions in Southeast Asia, for instance, are aggressively updating their risk frameworks. Professionals asking “How Do AI Agents Affect Malaysia’s Finance Field by 2026?” will note that regulatory bodies are heavily emphasizing biometric liveness detection and multi-factor authentication to combat the surge in AI-generated fraud. Whether it is an automated voice calling a retail banking customer or a synthetic executive ordering a multi-million-dollar transfer, the attack vector remains fundamentally the same.

Strategies for Mitigating Deepfake Security Risks in 2026

Defending against this onslaught requires a combination of technological upgrades and procedural overhauls. Relying solely on employee awareness training is no longer sufficient when the deceptive media is indistinguishable from reality. Organizations are implementing strict countermeasures to address Deepfake Security Risks in 2026, including:

- Cryptographic Verification: Mandating digital watermarks or cryptographic signatures for all internal executive communications, ensuring that voice and video calls are authenticated at the network level before they even reach the recipient.

- Out-of-Band Authentication: Requiring employees to verify urgent financial requests through an independent channel, such as a pre-approved internal messaging system, rather than responding to the caller directly.

- Behavioral Analytics: Deploying AI-driven security tools that monitor network traffic and communication patterns for anomalies, identifying the subtle digital footprints of deepfake generation tools.

- Safe Words and Challenge Questions: Establishing internal corporate safe words that executives must provide when authorizing high-value transactions remotely.

As the barrier to entry for generating convincing synthetic media drops toward zero, the volume and sophistication of voice cloning scams will only grow. Addressing Deepfake Security Risks in 2026 demands that financial institutions operate under a strict zero-trust audio policy, where voices must be authenticated just as rigorously as passwords. The same techniques that drive financial fraud also underpin the broader problem of corporate identity theft and social engineering, which the next section examines.

Corporate Identity Theft and Social Engineering

As we delve deeper into the evolving cyber threat landscape, we must address the alarming rise in corporate identity theft and targeted manipulation. The rapid proliferation of artificial intelligence has armed threat actors with unprecedented capabilities to deceive even the most vigilant professionals. Among the most critical concerns for modern enterprises are the Deepfake Security Risks in 2026. Fraudsters no longer rely solely on rudimentary phishing emails or misspelled domains; instead, they clone executive voices and synthesize high-definition video appearances to bypass conventional authentication layers entirely.

In the modern corporate sphere, digital identity theft is scaling rapidly, fueled by easily accessible machine learning tools. The convergence of targeted social engineering with hyper-realistic media has made it very difficult for employees to verify who is on the other end of a phone call, a voicemail, or a virtual meeting. Addressing the Deepfake Security Risks in 2026 requires more than updated firewalls and endpoint protection; it demands an overhaul of the human element in cyber defense and a fundamental shift in how trust is established within digital communication channels.

The Next Level of Business Email Compromise

Traditional Business Email Compromise (BEC) has officially evolved into Business Identity Compromise. In these advanced and highly lucrative attacks, cybercriminals seamlessly insert synthetic audio and video into live corporate communications. For instance, a finance manager might receive a voice memo from the CEO demanding an immediate and highly confidential wire transfer, complete with the executive’s precise vocal intonations, speech cadence, and even simulated background noise. Mitigating Deepfake Security Risks in 2026 depends heavily on recognizing these sophisticated audio and video spoofing mechanisms before unauthorized transactions are approved.

As organizations integrate new communication technologies and expand their remote workforce, verifying the identity of leaders and employees becomes paramount. For a practical perspective on building resilient teams and verifying managerial identities during organizational growth, leaders can explore “How to Understand SME HR for New IT CEOs in Singapore 2026?” By embedding stringent human resources verification protocols alongside advanced IT security frameworks, enterprises can detect fraudulent identities before they gain internal access. Industry experts repeatedly emphasize that navigating Deepfake Security Risks in 2026 hinges on robust, multi-factor identity validation both during the initial hiring process and throughout daily corporate operations.

Hyper-Realistic Phishing and Synthetic Personas

Another profound dimension of corporate identity theft and social engineering involves the creation of entirely synthetic personas. Rather than merely impersonating an existing executive, threat actors now construct completely fake employee profiles—complete with AI-generated headshots, forged academic credentials, and cloned voices—to infiltrate corporate networks as freelance contractors or remote workers. This tactic fundamentally alters the traditional threat model. Managing Deepfake Security Risks in 2026 means security teams must proactively deploy real-time biometric liveness detection and behavioral analytics to catch these digital anomalies before they exfiltrate sensitive data.

To fully understand the gravity of these emerging attack vectors, it is highly beneficial to consult leading threat intelligence resources. For example, comprehensive breakdowns of how deepfake attacks operate reveal that modern attackers meticulously combine AI forgeries with deep psychological manipulation. When employees are pressed with false urgency by a seemingly authentic executive video conference, traditional defense mechanisms often fail. To counteract this, enterprises must implement the following strategies:

- Mandatory cryptographic verification for all internal fund transfer requests.

- Continuous simulations that train staff to recognize micro-glitches in AI-generated video and audio.

- Implementation of internal “safe words” or out-of-band communication channels for high-stakes business decisions.

- Integration of advanced threat detection tools specifically designed to analyze media metadata and detect synthetic interference.

Without these proactive measures, the psychological leverage applied by attackers remains dangerously effective. Ultimately, continuous employee education and adaptive security policies are urgently required to neutralize the Deepfake Security Risks in 2026.

Political Manipulation and Misinformation Campaigns

While adopting robust compliance frameworks and enterprise defense strategies is critical to safeguarding organizational integrity against targeted attack methods, we must also understand the macro-level geopolitical forces driving these technologies. The external environment is facing a profound crisis, and analyzing Deepfake Security Risks in 2026 is essential to comprehending the unprecedented scale of political manipulation and misinformation campaigns today. Advanced artificial intelligence no longer simply blurs the line between fact and fiction; it has actively weaponized deception to destabilize democratic processes, creating collateral damage that impacts public institutions and private enterprises alike.

The Weaponization of Synthetic Media in Global Elections

As nations worldwide prepare for closely contested elections, the deployment of hyper-realistic digital forgeries has become a specialized attack vector. Deepfake Security Risks in 2026 are primarily characterized by the alarming speed at which fabricated audio and video clips can be generated and distributed. In previous election cycles, bad actors utilized rudimentary bots to amplify divisive rhetoric. Today, the landscape involves sophisticated cognitive manipulation, where deepfakes are precision-targeted to achieve specific malicious outcomes:

- Suppressing voter turnout: Broadcasting fabricated messages about polling location closures or localized security threats.

- Character assassination: Falsely attributing highly controversial and polarizing statements to key political candidates.

- Manufacturing crises: Simulating catastrophic geopolitical events or financial scandals merely days before citizens head to the polls.

According to risk forecasts from institutions like the World Economic Forum, AI-driven disinformation ranks among the most severe global threats, severely degrading the information ecosystem. The sheer volume of synthetic content creates a shadow economy of election interference, making Deepfake Security Risks in 2026 a primary concern for international election watchdogs.

Cognitive Warfare and the Erosion of Public Trust

Beyond direct electoral interference, the continuous barrage of misinformation leads to a more insidious outcome: the complete erosion of public trust. When assessing Deepfake Security Risks in 2026, psychologists and security experts point to the “liar’s dividend.” This phenomenon occurs when the public becomes so accustomed to the pervasive presence of deepfakes that actual, authentic evidence of misconduct is easily dismissed as AI-generated. Deepfake Security Risks in 2026 extend beyond the fake content itself; they encompass the resulting societal paralysis where objective truth becomes entirely subjective. Citizens constantly exposed to manipulated media experience severe cognitive fatigue, making them increasingly susceptible to confirmation bias. The strategic objective of many misinformation campaigns is not necessarily to convince the public of a specific lie, but rather to exhaust their capacity to discern the truth at all.

Geopolitical Ramifications and Nation-State Threat Actors

The implications of these campaigns naturally cross international borders, involving well-funded nation-state threat actors. These state-sponsored groups employ deepfakes as a core pillar of modern cyber warfare and espionage. The job market reflects this shift: reports such as “Tìm gì ở báo cáo xu hướng nhu cầu thị trường CNTT VN 2026” (roughly, “What to Look for in the 2026 Vietnam IT Market Demand Trends Report”) provide insight into the surging demand for cybersecurity professionals trained specifically to combat these AI-driven geopolitical threats. Governments and defense agencies are aggressively recruiting talent capable of executing a systematic defense protocol:

- Reverse-engineering synthetic media algorithms to identify the foundational AI models used.

- Tracing the digital distribution networks back to foreign intelligence units.

- Deploying rapid counter-measures to neutralize the spread of manipulated content across social platforms.

Furthermore, examining Deepfake Security Risks in 2026 reveals that diplomatic relations are highly vulnerable to synthetic audio that fabricates political hostilities or trade embargoes, creating friction where none truly exists.

Ultimately, addressing Deepfake Security Risks in 2026 requires understanding that political manipulation is merely a testing ground. The tactics perfected by state actors to disrupt elections and society are rapidly being commodified and sold to cybercriminal cartels. As nation-states and malicious syndicates refine these manipulation tactics on the global stage, they simultaneously adapt them for targeted corporate espionage and financial fraud. This escalating threat landscape necessitates a closer look at the specific regulatory responses and advanced technological countermeasures that will define our digital survival in the coming years.

The Impact on Biometric Authentication Systems

Central to the escalating threat landscape is the profound disruption of verification methods we once considered virtually infallible. Understanding the full scope of Deepfake Security Risks in 2026 requires an in-depth examination of how artificially generated media is systematically undermining biometric authentication frameworks across the globe. From banking to enterprise access, the assumption that a fingerprint, voiceprint, or facial scan indisputably proves human identity is being aggressively challenged by highly scalable synthetic media tools.

The Erosion of Trust in Liveness Detection

For years, liveness detection mechanisms—which prompt users to blink, smile, or turn their heads—served as a robust barrier against spoofing attacks. However, one of the most alarming Deepfake Security Risks in 2026 involves the direct bypass of these exact liveness checks. Threat actors now utilize real-time deepfake models that can instantly map a targeted individual’s facial features onto an attacker’s movements. This allows the cybercriminal to nod, speak, and interact naturally on camera, tricking the security protocols into registering a live, authorized user. As highlighted in Brilliance Security Magazine’s analysis on securing MFA, the barrier to entry has lowered drastically, enabling fraudsters to deceive facial recognition and voice authentication systems with synthetic images or cloned audio. The reality is that standard single-signal biometric defenses are no longer sufficient to secure sensitive digital environments.

Corporate Identity and HR Vulnerabilities

The corporate sector, particularly human resources and talent acquisition, is bearing a significant portion of this impact. Evaluating the broader Deepfake Security Risks in 2026 highlights a critical vulnerability within remote hiring and onboarding operations. Threat actors have successfully applied for remote roles using deepfake personas, passing multiple rounds of video interviews and background checks undetected. As businesses expand globally and explore international talent strategies, often weighing questions like “Is HR Outsourcing Vietnam Right for Your Business in 2026?”, the need for robust, fraud-resistant remote onboarding protocols becomes paramount. Without continuous, multi-layered identity intelligence, organizations risk granting insider access to sophisticated state-sponsored hackers or cybercriminal syndicates operating under the guise of legitimate employees.

Advanced Injection Attacks and Synthetic Identities

Beyond live impersonation, attackers are increasingly relying on camera injection techniques. In the context of Deepfake Security Risks in 2026, virtual camera injections represent a highly sophisticated threat vector. Instead of holding a screen up to a webcam, bad actors use specialized software to bypass the physical camera entirely, directly injecting a pre-recorded or dynamically generated deepfake video stream into the application’s verification API. Because the system believes the video feed is originating from the device’s native hardware, the manipulated media easily passes initial security validations.
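One narrow, easily evaded signal against camera injection is the reported name of the video device, combined with whether the client application passed an integrity check. The Python sketch below illustrates the idea only: the label fragments, the `client_attested` flag, and the function names are assumptions, and a match should be treated as a reason for review rather than proof of an attack.

```python
# Illustrative label fragments only; this tuple is an assumption, not a vetted blocklist.
VIRTUAL_CAMERA_HINTS = ("virtual", "manycam")


def looks_like_virtual_camera(device_label: str) -> bool:
    """True if the reported device name contains a known virtual-camera fragment."""
    label = device_label.lower()
    return any(hint in label for hint in VIRTUAL_CAMERA_HINTS)


def assess_video_source(device_label: str, client_attested: bool) -> str:
    """Flag the session when the device name is suspicious or the client is unattested.

    `client_attested` is a hypothetical flag meaning the capturing application
    passed some integrity check; how that check works is out of scope here.
    """
    if looks_like_virtual_camera(device_label) or not client_attested:
        return "needs_review"
    return "no_flag"
```

Attackers can rename devices, so a check like this only makes sense as one input among several, never as the sole gate.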

Furthermore, these techniques are often paired with the creation of synthetic identities. By amalgamating stolen biometric data points from various individuals—such as combining one person’s eyes with another’s jawline and a third person’s voice—attackers forge entirely new personas that do not exist in the real world. To effectively counter Deepfake Security Risks in 2026, organizations must pivot toward comprehensive biometric orchestration platforms. These platforms move beyond static checks, analyzing behavioral biometrics, device intelligence, and network anomalies simultaneously.

Ultimately, navigating Deepfake Security Risks in 2026 demands a shift from one-time authentication to continuous verification. As synthetic media continues to blur the lines between reality and fabrication, securing our digital identities will require an unprecedented level of real-time, AI-driven scrutiny. This ongoing evolution in authentication technology seamlessly paves the way for understanding how industries are actively fighting back against these deceptive forces.
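The shift from one-time authentication to continuous verification can be illustrated with a small trust-decay model. This is a sketch under assumptions: the `Session` class, the exponential half-life, and the thresholds are hypothetical choices made for illustration, not a standard.

```python
from dataclasses import dataclass


@dataclass
class Session:
    trust: float = 1.0         # 1.0 immediately after a successful identity check
    last_checked: float = 0.0  # seconds, on whatever clock the caller uses


def current_trust(session: Session, now: float, half_life_s: float = 600.0) -> float:
    """Trust halves every `half_life_s` seconds since the last check (illustrative model)."""
    elapsed = max(0.0, now - session.last_checked)
    return session.trust * 0.5 ** (elapsed / half_life_s)


def needs_step_up(session: Session, now: float, required: float) -> bool:
    """True when the decayed trust is too low for the action being attempted."""
    return current_trust(session, now) < required


def record_check(session: Session, now: float, passed: bool) -> None:
    """Reset trust after a fresh verification, or drop it to zero on failure."""
    session.trust = 1.0 if passed else 0.0
    session.last_checked = now
```

A sensitive action such as approving a transfer would pass a high `required` value, so a user verified ten minutes ago is asked to re-verify, while low-risk actions can proceed on older evidence.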

Emerging Deepfake Detection Technology and Tools

As cybercriminals continuously refine their manipulation tactics, organizations worldwide are aggressively adopting sophisticated countermeasures to safeguard their digital assets. The escalating Deepfake Security Risks in 2026 demand more than just basic cybersecurity awareness; they require a proactive deployment of multi-layered, AI-driven identification systems. Recognizing the profound financial and reputational damages that synthetic media can inflict, technology developers have accelerated the release of forensic-grade platforms designed specifically to analyze and mitigate Deepfake Security Risks in 2026. These advanced defense mechanisms ensure that trust remains intact across corporate communications, public relations, and internal human resources operations.

AI-Powered Multi-Modal Verification Systems

The latest generation of detection software operates on a multi-modal framework, scrutinizing audio, video, and text simultaneously rather than relying on a single vulnerability point. Leading solutions leverage advanced neural networks to flag pixel-level distortions, unnatural lighting reflections, and imperceptible frame-to-frame inconsistencies. Tools like Reality Defender and Sensity AI have become critical enterprise assets, offering robust capabilities that analyze file structures and metadata in real time. Sensity AI, for instance, provides multilayer forensic analysis that delivers court-ready reports, neutralizing Deepfake Security Risks in 2026 before malicious media can infiltrate corporate or government networks.

To fully optimize these detection platforms, modern systems integrate several core functionalities:

- Biometric Integrity Checks: Continuous scanning of natural human movements, such as micro-expressions and blinking patterns.

- Acoustic Signature Analysis: Detection of synthesized speech artifacts and irregularities in breathing rhythms.

- Metadata Forensics: Deep inspection of file origin points, compression histories, and underlying code alterations.
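As a rough illustration of how the three checks above might feed a single decision, here is a minimal Python sketch. The weights, thresholds, and names (`ModalityScores`, `fuse_scores`, `verdict`) are assumptions made for this example and do not describe how any named vendor's product works.

```python
from dataclasses import dataclass

# Hypothetical weights; a real deployment would calibrate these on labeled data.
WEIGHTS = {"biometric": 0.4, "acoustic": 0.4, "metadata": 0.2}


@dataclass
class ModalityScores:
    """Per-modality suspicion scores in [0, 1]; higher means more likely synthetic."""
    biometric: float
    acoustic: float
    metadata: float


def fuse_scores(scores: ModalityScores) -> float:
    """Combine per-modality scores into one weighted suspicion score."""
    total = (
        WEIGHTS["biometric"] * scores.biometric
        + WEIGHTS["acoustic"] * scores.acoustic
        + WEIGHTS["metadata"] * scores.metadata
    )
    return round(total, 3)


def verdict(scores: ModalityScores, threshold: float = 0.6) -> str:
    """Flag media when the fused score or any single modality is high."""
    strongest = max(scores.biometric, scores.acoustic, scores.metadata)
    if fuse_scores(scores) >= threshold or strongest >= 0.9:
        return "flag_for_review"
    return "pass"
```

Checking the strongest single modality alongside the weighted average means a clearly synthetic voice track is still flagged even when the video and metadata look clean.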

Managing these sophisticated software suites requires specialized technical talent. As companies build their internal security protocols to handle Deepfake Security Risks in 2026, they must ensure they have the right workforce in place. Partnering with an expert HR consulting firm such as Shelby Global can help technology leaders and human resources departments recruit cybersecurity professionals trained specifically in forensic media analysis and threat intelligence.

Real-Time Audio and Video Surveillance

Audio impersonation has recently emerged as one of the most prevalent attack vectors, often used to bypass knowledge-based authentication or to authorize fraudulent wire transfers. To counter this, real-time monitoring tools such as McAfee Deepfake Detector and Pindrop Pulse have been integrated directly into communication channels. These platforms continuously evaluate behavioral call signals to assign authenticity scores instantly. By immediately flagging synthetic speech patterns, these defensive tools minimize Deepfake Security Risks in 2026 during high-stakes corporate communications and virtual board meetings.
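To make the idea of a continuously updated authenticity score more concrete, here is a small Python sketch that smooths per-chunk scores over a sliding window. The class name, window size, and alert threshold are hypothetical, and the per-chunk scores are assumed to come from some upstream detector that is not shown.

```python
from collections import deque


class RollingAuthenticityMonitor:
    """Tracks a moving average of per-chunk authenticity scores for one call."""

    def __init__(self, window: int = 5, alert_below: float = 0.5) -> None:
        self.scores = deque(maxlen=window)  # keeps only the most recent chunks
        self.alert_below = alert_below

    def update(self, chunk_score: float) -> bool:
        """Add one score in [0, 1]; return True if the call should be flagged."""
        self.scores.append(chunk_score)
        average = sum(self.scores) / len(self.scores)
        return average < self.alert_below
```

Averaging over a window trades a little latency for fewer false alarms: one noisy chunk does not trigger a warning, but a sustained drop in authenticity does.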

In addition to audio monitoring, modern browser extensions and endpoint security clients now warn users within seconds if a video stream exhibits signs of AI generation. By operating seamlessly in the background without requiring extra user clicks, these solutions dramatically reduce the margin for human error. Addressing Deepfake Security Risks in 2026 relies heavily on this continuous, automated vigilance to protect employees who may not possess advanced technical expertise but are still frequent targets of social engineering campaigns.

The Continuous Cycle of Discovery and Disruption

Ultimately, modern detection technology is not just about identifying a single forgery; it encompasses a full lifecycle of discovery, validation, and active disruption. Advanced threat intelligence platforms continuously scan social media feeds, domain registries, and dark web forums to uncover circulating synthetic media variants before they gain viral traction. When an organization effectively anticipates Deepfake Security Risks in 2026, it can swiftly trigger automated takedown requests to halt the spread of damaging and deceptive campaigns.
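One common way to spot re-encoded or lightly edited copies of a known fake is to compare perceptual hashes by Hamming distance. The Python sketch below assumes the hashes have already been computed elsewhere as integers; the function names and the distance threshold are illustrative and are not taken from any specific platform.

```python
def hamming_distance(hash_a: int, hash_b: int) -> int:
    """Count the bits that differ between two perceptual hashes."""
    return bin(hash_a ^ hash_b).count("1")


def find_variants(known_hash: int, candidates: dict, max_distance: int = 5) -> list:
    """Return names of candidates whose hash is within max_distance of the known fake."""
    return sorted(
        name
        for name, candidate_hash in candidates.items()
        if hamming_distance(known_hash, candidate_hash) <= max_distance
    )
```

For example, `find_variants(0b11110000, {"repost": 0b11110001, "unrelated": 0b00001111}, max_distance=2)` returns `["repost"]`, since only that hash differs by a single bit.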

As detection algorithms grow increasingly intelligent, they form a vital bulwark against sophisticated identity deception. However, technology alone cannot entirely eliminate the broader threat landscape. A holistic approach demands that these emerging tools be paired with rigorous internal policy enforcement and strategic operational oversight. Transitioning from purely technological defense to enterprise-wide governance is the next critical step in ensuring resilient operations against tomorrow’s digital frauds.

Regulatory Responses and New AI Legislation

As the technological landscape rapidly evolves, governments and international regulatory bodies are scrambling to establish frameworks capable of mitigating Deepfake Security Risks in 2026. The sheer scale of synthetic media manipulation has transcended corporate boundaries, prompting legislative action on a global scale. In response to the escalating threats, lawmakers are transitioning from advisory guidelines to strictly enforced compliance mandates. Understanding these new laws is no longer a niche legal concern but a fundamental requirement for business continuity and risk management.

The European Union’s Pioneering AI Act

The most comprehensive legislative framework addressing Deepfake Security Risks in 2026 is the European Union’s Artificial Intelligence Act, whose transparency rules are scheduled to apply from August 2026. Article 50 of the AI Act sets transparency obligations for generative systems: any AI-generated or manipulated image, audio, or video that constitutes a deepfake must be disclosed as artificially generated or manipulated.

Key pillars of the EU’s approach include:

- Mandatory Disclosure: Providers of generative systems must mark synthetic output in a machine-readable format, and deployers must disclose deepfakes as such, so that the origins of the content can be traced and verified.

- Risk Categorization: AI tools designed for biometric categorization or emotion recognition are subjected to extreme scrutiny or outright bans if deemed an unacceptable risk to human rights.

- Severe Penalties: Failure to comply with these transparency obligations can draw fines of up to EUR 15 million or 3% of worldwide annual turnover, pushing enterprises to invest in labeling and deepfake detection infrastructure.
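To show what a machine-readable label could look like in practice, here is a minimal Python sketch that writes a JSON disclosure record tied to the exact media bytes. The field names and functions are assumptions for illustration only; this is not the format prescribed by the AI Act, nor an implementation of any provenance standard.

```python
import hashlib
import json


def build_disclosure_label(media_bytes: bytes, generator: str) -> str:
    """Return a JSON label that ties an AI-generated disclosure to exact content."""
    label = {
        "ai_generated": True,  # explicit disclosure flag
        "generator": generator,  # hypothetical tool name supplied by the caller
        "sha256": hashlib.sha256(media_bytes).hexdigest(),
    }
    return json.dumps(label, sort_keys=True)


def label_matches(media_bytes: bytes, label_json: str) -> bool:
    """Check that a label still refers to these exact bytes."""
    label = json.loads(label_json)
    return label.get("sha256") == hashlib.sha256(media_bytes).hexdigest()
```

Binding the label to a content hash means any later edit to the file breaks the match, which is a cheap signal that the label and the media have drifted apart.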

For more detail on how these European regulations affect content moderation, industry leaders are studying what the EU’s new AI Code of Practice means for labeling deepfakes, including the interplay between the AI Act and the Digital Services Act (DSA).

Global Regulatory Convergence and Sector-Specific Rules

While Europe leads the legislative charge, other regions are adopting similar strategies to combat Deepfake Security Risks in 2026. In the United States, federal agencies and state governments have introduced a patchwork of increasingly stringent rules and laws aimed at preventing AI-driven fraud and electoral interference. Corporate entities across sectors like finance, supply chain, and logistics are finding themselves at the intersection of these new compliance standards.

This evolving regulatory environment has a profound effect on operational strategies. For instance, global trade compliance and hiring practices must now factor in identity verification mandates. If you are exploring how automation intersects with these regional shifts, our guide "How AI Agents Affect Logistics in Malaysia by 2026?" provides useful context on adapting to technology-driven policy changes.

To navigate the complexities of Deepfake Security Risks in 2026, organizations must adopt a proactive legal posture. The compliance requirements demand that companies not only update their internal acceptable use policies but also rigorously audit their third-party vendors’ AI practices. Furthermore, regulatory bodies will increasingly demand proof of security by design, meaning organizations must demonstrate that they have integrated deepfake mitigation controls directly into their IT infrastructure.

The Impact on Enterprise Liability

The most profound shift brought about by these new laws is the redefinition of corporate liability. When evaluating Deepfake Security Risks in 2026, business leaders must recognize that falling victim to a deepfake scam (such as an unauthorized wire transfer approved by a synthetic voice) could also result in regulatory fines if the company failed to implement mandated verification protocols. In practice, the enterprise may need to show that it took reasonable, legally defined steps to authenticate communications.

By enforcing strict documentation, labeling, and reporting standards, the new AI legislation aims to create a transparent digital ecosystem. However, legislation alone is a reactive measure that lags behind attackers. As we prepare to combat Deepfake Security Risks in 2026, relying solely on compliance is insufficient to prevent sophisticated attacks. Organizations must pair these legal requirements with robust internal defense mechanisms. This leads us to the need for practical, enterprise-grade mitigation strategies and advanced employee training programs that can stop these hyper-realistic threats at the front door.

Best Practices for Corporate Cybersecurity Defense

As organizations grapple with the escalating volume of synthetic media, building a robust defense mechanism is no longer optional. The landscape of Deepfake Security Risks in 2026 demands a proactive, comprehensive strategy that integrates advanced technology with human intuition. Corporate cybersecurity frameworks must evolve beyond traditional firewalls and perimeter defenses, as deepfakes target the human element—exploiting trust rather than technical vulnerabilities. To effectively mitigate Deepfake Security Risks in 2026, companies need to adopt a holistic approach that encompasses rigorous verification protocols, continuous education, and cutting-edge detection tools.

Implement Multi-Layered Authentication and Zero-Trust Architectures

The first line of defense against modern impersonation attacks is eliminating implicit trust. The traditional paradigm of verifying a user once and granting broad access is highly vulnerable to deepfake-enabled impersonation. Instead, organizations must implement a strict Zero-Trust architecture. In a Zero-Trust model, every access request is thoroughly vetted, regardless of whether it originates from inside or outside the corporate network. Reducing Deepfake Security Risks in 2026 requires making verification a mandatory, low-friction part of daily corporate operations through specific strategies:

- Continuous Verification: Eliminate implicit trust by requiring recurring authentication for sensitive data access, especially during high-stakes financial operations.

- Secondary Communication Channels: If an employee receives a video call from the CEO requesting an emergency fund transfer, protocol should dictate a follow-up verification via a secure internal messaging app or a predetermined offline code word.

- Biometric Liveness Detection: Multi-factor authentication (MFA) must incorporate advanced checks—such as analyzing micro-expressions or real-time pulse rates—to ensure the person on the other end is a living human, not a digitally rendered clone.
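The secondary-channel rule above can be expressed as a simple policy check. The following Python sketch is illustrative only: the action names, the amount limit, and the helper functions are hypothetical, and a real deployment would deliver and store the code word through a separate, secured channel.

```python
import hmac
from dataclasses import dataclass
from typing import Optional

# Hypothetical policy values chosen only for illustration.
HIGH_RISK_ACTIONS = {"wire_transfer", "credential_reset", "vendor_bank_change"}
AMOUNT_LIMIT = 10_000


@dataclass
class Request:
    action: str
    amount: float = 0.0
    channel: str = "video_call"


def needs_out_of_band_check(request: Request) -> bool:
    """Require a second channel for risky actions, however convincing the caller."""
    return request.action in HIGH_RISK_ACTIONS or request.amount >= AMOUNT_LIMIT


def code_word_matches(expected: str, supplied: str) -> bool:
    """Compare code words in constant time to avoid leaking partial matches."""
    return hmac.compare_digest(expected.encode(), supplied.encode())


def approve(request: Request, expected_code: str, supplied_code: Optional[str]) -> bool:
    """Approve only if no check is needed or the second-channel code is correct."""
    if not needs_out_of_band_check(request):
        return True
    return supplied_code is not None and code_word_matches(expected_code, supplied_code)
```

The key design choice is that the decision never depends on how authentic the caller looks or sounds; a high-risk request is blocked until the second channel confirms it.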

Conduct Scenario-Based Employee Training and Red Teaming

Technology alone cannot thwart an attack if the human target remains unaware. Therefore, continuous, scenario-based employee training is a critical pillar of corporate defense. Standard phishing simulations are no longer sufficient; security teams must conduct “red teaming” exercises that actively deploy benign deepfakes against their own workforce to test readiness. By exposing employees to synthetic voice clones of their actual managers or AI-generated video meetings, staff can learn to identify subtle inconsistencies, such as unnatural blinking, glitching backgrounds, or audio-visual desynchronization.

Furthermore, as AI ecosystems expand regionally, understanding the broader tech landscape is vital for threat intelligence. For example, business leaders analyzing "How AI Agent Effect to Technology Field in Malaysia by 2026?" can draw parallels to how automated agents might be weaponized in social engineering campaigns across Southeast Asia. Educated employees who understand the underlying mechanics of these localized AI trends are far better equipped to recognize and report suspicious interactions, drastically reducing the success rate of deepfake attacks and, with it, Deepfake Security Risks in 2026.

Leverage AI-Powered Threat Detection Tools

Fighting artificial intelligence requires artificial intelligence. To combat the sophisticated Deepfake Security Risks in 2026, corporations must integrate AI-powered threat detection tools directly into their communication platforms. These tools analyze audio and video streams in real time, searching for algorithmic artifacts, pixel manipulation, and unnatural frequency patterns invisible to the naked eye. Deploying these AI defenses typically follows a phased approach:

- Assessment: Identify which communication channels (e.g., video calls, voicemails, executive emails) are most vulnerable to synthetic media attacks.

- Integration: Embed detection models trained against GAN (Generative Adversarial Network) output and other generative techniques into existing corporate video conferencing and enterprise network gateways.

- Monitoring: Continuously update the detection models to recognize the latest and most advanced deepfake generation techniques.
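For the assessment phase, one simple approach is to score each channel by likelihood and impact and rank the results. The Python sketch below assumes a 1 to 5 scale for both factors; the scale, the channel names, and the function `rank_channels` are illustrative assumptions, not an established methodology from the sources cited here.

```python
def rank_channels(channels: dict) -> list:
    """Rank channels by likelihood x impact, highest risk first.

    `channels` maps a channel name to a (likelihood, impact) pair on a 1-5 scale.
    """
    scored = [
        (name, likelihood * impact)
        for name, (likelihood, impact) in channels.items()
    ]
    return sorted(scored, key=lambda item: item[1], reverse=True)
```

Calling `rank_channels({"video_calls": (4, 5), "voicemail": (3, 3), "executive_email": (2, 4)})` puts video calls first with a score of 20, followed by voicemail (9) and executive email (8), which suggests where detection tooling should be integrated first.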

Leading institutions have repeatedly warned about this technological arms race. According to the World Economic Forum’s Global Risks Report, AI-driven misinformation and disinformation constitute one of the most severe short-term threats globally. To stay ahead, enterprise security suites are now embedding these automated systems to flag a suspected deepfake with a visual warning before an employee can comply with a fraudulent request.

By combining Zero-Trust protocols, immersive training, and AI-driven detection mechanisms, businesses can construct a formidable barrier against synthetic media threats. Managing Deepfake Security Risks in 2026 is an ongoing commitment to resilience. As we finalize our understanding of these defensive postures, it is essential to look forward and anticipate what the next generation of synthetic threats will entail, guiding us to the final verdict on the future of digital trust.

Conclusion

As we summarize our comprehensive analysis, it is increasingly obvious that managing Deepfake Security Risks in 2026 is no longer an optional endeavor for modern enterprises. Synthetic media has transitioned from a theoretical concept to an accessible, weaponized tool used by cybercriminals worldwide. We are witnessing a paradigm shift where visual and auditory evidence, once considered the gold standard for authentication, can be entirely fabricated. This reality forces executives, security teams, and individual users to reevaluate how they establish and maintain digital trust.

The convergence of Generative Adversarial Networks (GANs), other generative models, and sophisticated social engineering tactics has created an environment where traditional security perimeters are no longer sufficient on their own. To properly mitigate Deepfake Security Risks in 2026, organizations must deploy continuous, AI-driven authentication mechanisms. Relying solely on legacy verification protocols leaves companies exposed to devastating financial losses, reputational damage, and severe operational disruptions. By recognizing the scale of the threat, leaders can begin implementing the resilient frameworks necessary for survival.

Strategic Preparation for Upcoming Vulnerabilities

Industry experts consistently emphasize that evaluating Deepfake Security Risks in 2026 requires more than just reactive policies; it demands a proactive overhaul of an organization’s digital infrastructure. Cybercriminals are now capable of executing live-feed video manipulations and voice cloning during high-stakes executive meetings. Consequently, businesses must adopt multi-layered defenses, which should ideally include:

- Cryptographic watermarking for internal and external corporate communications.

- Real-time biometric analysis and continuous monitoring during remote authentication.

- Advanced liveness detection systems to instantly verify human presence.
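As a simplified stand-in for cryptographic watermarking, the Python sketch below attaches a detached HMAC tag to a piece of media so the recipient can verify it came from a holder of the shared key and was not altered. This is message authentication rather than an in-band watermark, and the function names and key handling are assumptions made for illustration.

```python
import hashlib
import hmac


def sign_media(key: bytes, media_bytes: bytes) -> str:
    """Compute a hex HMAC-SHA256 tag over the media using a shared secret key."""
    return hmac.new(key, media_bytes, hashlib.sha256).hexdigest()


def verify_media(key: bytes, media_bytes: bytes, tag: str) -> bool:
    """Return True only if the tag matches these bytes under this key."""
    expected = sign_media(key, media_bytes)
    return hmac.compare_digest(expected, tag)
```

A shared-key tag like this suits internal communications where both sides can hold the secret; for external recipients, a public-key signature scheme would usually be the better fit.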

Collaboration and threat intelligence sharing are equally critical. In fact, a recent deep dive by Cyble into deepfake cybersecurity solutions [1] points out that the financial toll of synthetic media attacks is pushing organizations to invest heavily in AI-powered defense tools. Security teams are urged to participate in simulated attack exercises that mimic realistic executive impersonations. By exposing employees to these synthetic threats in a controlled environment, companies can build a formidable human firewall, which is often the last line of defense when technological barriers fail.

Sector-Specific Impacts and Human Resources

The evolving landscape of Deepfake Security Risks in 2026 also intersects heavily with regional and sector-specific vulnerabilities. Industries handling massive volumes of financial transactions, proprietary data, and sensitive client information are the primary targets. Furthermore, human resources and supply chain operations are experiencing unprecedented pressure. Malicious actors are deploying synthetic identities to bypass automated screening tools and vendor verification processes, leading to severe internal breaches.

For instance, leaders assessing regional operations are discovering how pervasive these threats have become. The insights found within the Trend Report of FMCG Risk in Singapore 2026 highlight the expanding surface area for corporate espionage and identity fraud in fast-moving consumer goods and logistics networks. When addressing Deepfake Security Risks in 2026, HR and vendor management teams find themselves on the front lines. They must integrate deepfake detection directly into their candidate and partner onboarding workflows to ensure that malicious actors do not infiltrate the company from within.

The Final Verdict on Digital Trust

Ultimately, surviving Deepfake Security Risks in 2026 will depend on an organization’s ability to evolve alongside the technology. The rapid democratization of artificial intelligence means that the barrier to entry for cybercriminals will only continue to fall. However, by using equally sophisticated defensive AI, organizations can detect anomalies and neutralize synthetic media before it causes harm. To sustain resilience against these ongoing threats, security leaders should adopt three practices:

- Foster a culture of rigorous skepticism and continuous employee education regarding synthetic media.

- Enforce a strict zero-trust architecture across all internal and external communication platforms.

- Commit to integrating advanced threat intelligence and real-time scanning into daily operations.

In this new digital era, trust must be earned, continuously verified, and cryptographically secured. The future of enterprise security relies on acknowledging that our eyes and ears can be deceived. By prioritizing proactive awareness and deploying robust technical countermeasures, businesses can confidently navigate the treacherous waters of synthetic media, safeguarding their critical assets, their hard-earned reputation, and the fundamental integrity of their digital communications for years to come.
